Phase 2 is done

Mnemonic is being built in five phases. Phase 1 was a single local service: intentionally simple, just enough to validate the concept end to end. Phase 2 is the planned decomposition into separate services, and that's what has landed over the last few weeks.

Phase 1: the baseline

One service, two databases. You talked to it either through MCP (from Claude Code) or through a REST API (from scripts or admin tools).

```mermaid
---
config:
  theme: redux
  layout: dagre
---
flowchart TB
    n3["Admin<br>bash - cURL|HTTPie"] -- REST --> n2["Mnemonic"]
    n2 --> n7["PostgreSQL +<br>PGVector"] & n8["Neo4j"]
    n9(["Claude Code | Codex"]) -- MCP --> n2
    n3@{ shape: subproc}
    n7@{ shape: cyl}
    n8@{ shape: cyl}
```

Simple, good for validation. Stand it up with `docker compose up` and start testing MCP searches and REST writes immediately.

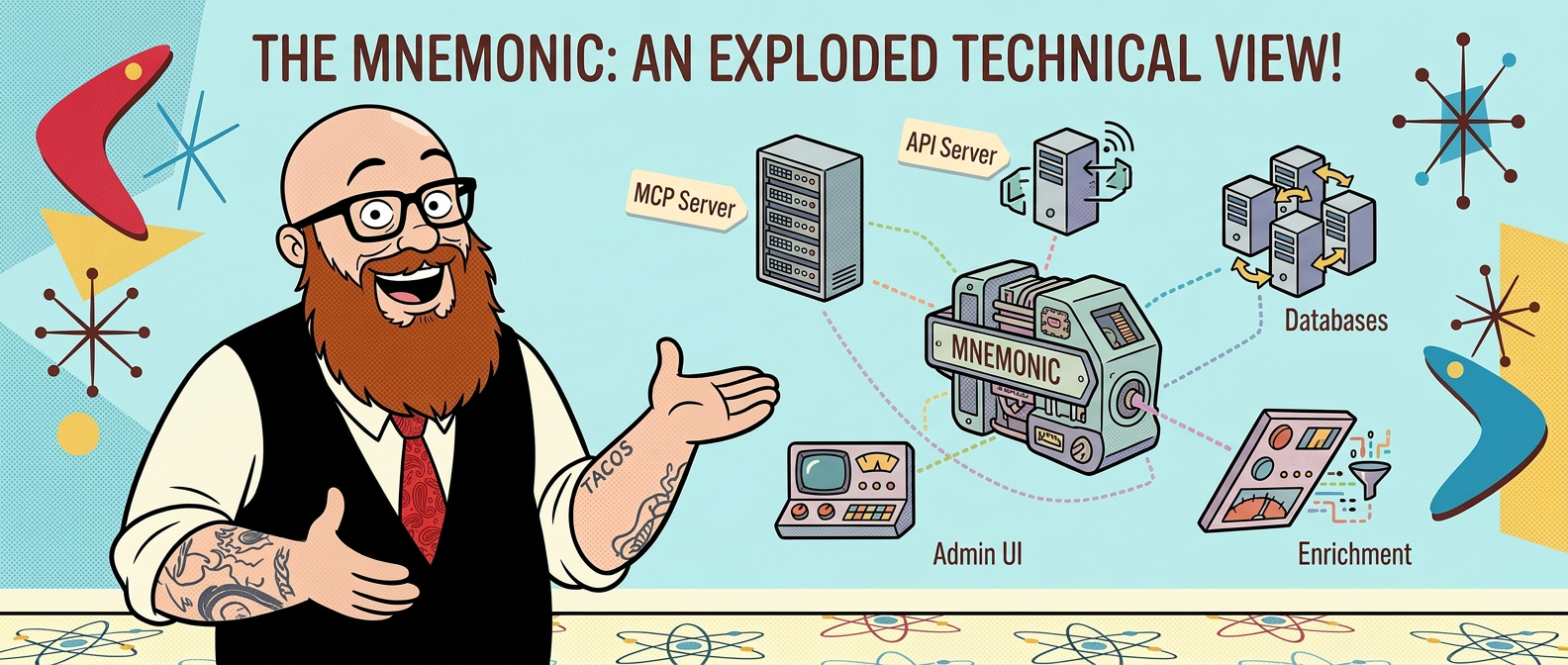

Phase 2: the split

Enrichment was always going to be async. When you POST a pattern, Mnemonic generates a semantic embedding and writes to both PostgreSQL and Neo4j; doing that synchronously would make API clients wait on work that has no reason to block them. The design always called for a job queue between the API and the enricher.
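The seam between the API and the enricher can be sketched in a single process. This is a minimal sketch under stated assumptions, not Mnemonic's code. Python's `queue.Queue` stands in for RabbitMQ and plain dicts stand in for the databases. `EnrichmentJob`, `post_pattern`, `enricher_loop`, and `fake_embed` are hypothetical names, and the `202` status is an assumption (the post doesn't say what the API returns). It shows the handler storing the pattern, enqueueing a job, and returning without waiting on enrichment.

```python
import queue
import threading
import uuid
from dataclasses import dataclass
from typing import Optional


@dataclass
class EnrichmentJob:
    # Hypothetical message shape; the post doesn't specify one.
    pattern_id: str
    text: str


# In-process stand-in for the RabbitMQ enrichment job queue.
jobs: "queue.Queue[Optional[EnrichmentJob]]" = queue.Queue()

# Dict stand-ins: what the API stores, and what the enricher produces.
patterns: dict[str, str] = {}
enriched: dict[str, int] = {}


def post_pattern(text: str) -> tuple[int, str]:
    """Hypothetical API handler: store, enqueue, and return without waiting."""
    pattern_id = str(uuid.uuid4())
    patterns[pattern_id] = text
    jobs.put(EnrichmentJob(pattern_id, text))
    return 202, pattern_id  # assumed 202 Accepted; enrichment runs later


def fake_embed(text: str) -> int:
    # Placeholder for real embedding generation (here: just the length).
    return len(text)


def enricher_loop() -> None:
    """Hypothetical enricher: process jobs until a None sentinel arrives."""
    while True:
        job = jobs.get()
        try:
            if job is None:
                return
            enriched[job.pattern_id] = fake_embed(job.text)
        finally:
            jobs.task_done()


# Usage: start the worker, post a pattern, then shut the worker down.
worker = threading.Thread(target=enricher_loop)
worker.start()
status, pid = post_pattern("retry with exponential backoff")
print(status)  # → 202, returned without waiting on the worker
jobs.put(None)  # sentinel: FIFO order means the real job is handled first
worker.join()
print(enriched[pid])  # → 30
```

In the real system the queue is RabbitMQ and the worker is a separate service. The shape is the same, though: the client's latency no longer includes embedding generation.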

Once that seam is in place, the rest follows naturally. The MCP server is purely read-only, so it has no business knowing anything about enrichment queues. The admin CLI and seed scripts don't belong in a server binary. That's four concerns and four services, plus a shared database layer used by both the API and the enricher: five repos.
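One way to keep the MCP server ignorant of writes and queues is to give it nothing but a read interface. Below is a minimal sketch assuming hypothetical names (`PatternReader`, `InMemoryStore`, `McpSearchTool`), with substring matching in place of vector and graph search. `typing.Protocol` is structural: a type checker such as mypy would flag a call to `add` through the reader, but Python won't stop it at runtime. Hard enforcement would have to come from elsewhere, for example read-only database credentials.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Pattern:
    pattern_id: str
    text: str


class PatternReader(Protocol):
    """Hypothetical read-only capability: all the MCP server is given."""

    def search(self, query: str, limit: int) -> list[Pattern]: ...


class InMemoryStore:
    """Stand-in for the shared database layer, which can read and write."""

    def __init__(self) -> None:
        self._rows: dict[str, Pattern] = {}

    def add(self, pattern: Pattern) -> None:
        self._rows[pattern.pattern_id] = pattern

    def search(self, query: str, limit: int) -> list[Pattern]:
        # Case-insensitive substring match in place of vector/graph search.
        needle = query.lower()
        hits = [p for p in self._rows.values() if needle in p.text.lower()]
        return hits[:limit]


class McpSearchTool:
    """Hypothetical MCP tool: depends on PatternReader, knows nothing of writes or queues."""

    def __init__(self, reader: PatternReader) -> None:
        self._reader = reader

    def handle(self, query: str) -> list[str]:
        return [p.text for p in self._reader.search(query, limit=5)]


# Usage: the admin side writes through the full store...
store = InMemoryStore()
store.add(Pattern("p1", "Retry with exponential backoff"))
store.add(Pattern("p2", "Circuit breaker"))

# ...while the MCP tool only ever sees the read interface.
tool = McpSearchTool(store)
print(tool.handle("retry"))  # → ['Retry with exponential backoff']
```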

What phase 2 looks like

```mermaid
---
config:
  theme: redux
  layout: dagre
---
flowchart TB
    claude["Claude Code"] -->|MCP| mcp_server["mnemonic MCP server"]
    admin["admin tools"] -->|REST| admin_api["mnemonic admin API"]
    admin_api --> pg[("PostgreSQL + PGVector")]
    admin_api --> queue[("RabbitMQ enrichment job queue")]
    queue --> enricher["enricher"]
    enricher --> pg
    enricher --> neo4j[("Neo4j")]
    mcp_server -->|read only| pg
    mcp_server -->|read only| neo4j
```

| Repo | What it does |
|---|---|
| mnemonic | MCP server; read-only, no writes |
| mnemonic-api | Admin REST API; accepts writes, publishes enrichment jobs |
| mnemonic-enricher | Consumes the job queue, runs enrichment, writes to both databases |
| mnemonic-dbs | Shared database layer used by both the API and the enricher |
| mnemonic-admin | CLI and scripts for managing content |
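The post doesn't say how the enricher handles RabbitMQ redelivering a message. Redelivery can happen when a consumer fails before acknowledging. One common approach is to make the dual write idempotent, keyed on the pattern ID. Below is a minimal sketch with in-memory stand-ins for PostgreSQL and Neo4j. The JSON message shape, `Enricher`, and `fake_embedding` are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class EnrichmentJob:
    # Hypothetical message shape published by the API.
    pattern_id: str
    text: str

    @classmethod
    def from_json(cls, body: bytes) -> "EnrichmentJob":
        data = json.loads(body)
        return cls(pattern_id=data["pattern_id"], text=data["text"])


def fake_embedding(text: str, dims: int = 4) -> list[float]:
    """Deterministic placeholder for a real embedding model."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return [b / 255 for b in digest[:dims]]


class Enricher:
    """Hypothetical consumer that writes to both stores idempotently."""

    def __init__(self) -> None:
        self.vectors: dict[str, list[float]] = {}  # stands in for PostgreSQL + PGVector
        self.nodes: set[str] = set()               # stands in for Neo4j pattern nodes

    def handle(self, body: bytes) -> None:
        job = EnrichmentJob.from_json(body)
        embedding = fake_embedding(job.text)
        # Both writes are keyed on pattern_id and overwrite rather than
        # append, so handling the same message twice leaves the same state.
        self.vectors[job.pattern_id] = embedding
        self.nodes.add(job.pattern_id)


# Usage: deliver the same message twice, as a redelivery would.
enricher = Enricher()
msg = json.dumps({"pattern_id": "p1", "text": "retry with backoff"}).encode("utf-8")
enricher.handle(msg)
enricher.handle(msg)
print(len(enricher.vectors), len(enricher.nodes))  # → 1 1
```

Against the real databases, the same property would come from upserts: PostgreSQL's `INSERT ... ON CONFLICT ... DO UPDATE` and Neo4j's `MERGE`.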

The MCP interface is clean now. It has one job and knows nothing about what happens when you add a pattern.

It's more moving parts than a single binary, but that's the point: each service has a single responsibility and can be scaled or replaced independently.

Eating our own dogfood

This work was done using Mnemonic itself. The Cognee setup I'd been running is gone; Mnemonic replaced it. It's been holding up well enough that I'm comfortable using it as my daily driver while I build the rest of it.

The next steps are authentication and authorization, which I'll handle with Envoy and OPA. After that, the final step is moving it all to the cloud.

Related: Mnemonic project page